The News: Qualcomm Technologies, Inc., a subsidiary of Qualcomm Incorporated, announced that the Qualcomm® Cloud AI 100, a high-performance AI inference accelerator, is shipping to select worldwide customers. Qualcomm Cloud AI 100 uses advanced signal processing and cutting-edge power efficiency to support AI solutions for multiple environments including the datacenter, cloud edge, edge appliance, and 5G infrastructure. The newly announced Qualcomm Cloud AI 100 Edge Development Kit is engineered to accelerate adoption of edge applications by offering a complete system solution for AI processing of up to 24 simultaneous 1080p video streams, along with 5G connectivity.

Analyst Take: Qualcomm announced the Cloud AI 100, its purpose-built chipset for AI inference and edge computing workloads, at its AI Day conference last April. The implications at the time caught my attention, especially the idea of Qualcomm taking its AI capabilities beyond the smartphone and into the datacenter. While the initial information was somewhat limited, today’s announcement brings many of the details to light, including the company’s plans to roll out the Cloud AI 100 selectively throughout the fall of 2020 and to bring it to general availability in the first half of 2021.

Cloud AI 100 Overview and Products

Qualcomm will offer the Cloud AI 100 in three different iterations: Dual M.2e (DM.2e), Dual M.2 (DM.2), and PCIe (Gen 3/4). Each step up reflects an improvement in performance, with a nominal increase in power consumption.

I break these into two categories: the lowest-power, moderate-throughput versions, and the still very low-power but much higher-throughput option.

In the first bucket, I put the Cloud AI 100 Dual M.2e and Dual M.2 models, which can hit 70 TOPS and 200 TOPS respectively (TOPS = trillion operations per second). These units are also capable of attaining 10,000 to 15,000 inferences per second while consuming less than 50 watts. An impressive feat.

The PCIe model, which is the top of the Cloud AI 100 food chain as it pertains to performance, achieves up to 400 TOPS, according to details released with the launch. The PCIe card hovers around 25,000 inferences per second at 50 to 100 watts. Slightly more power hungry, but still very low power against some of the “Big GPUs” out there.

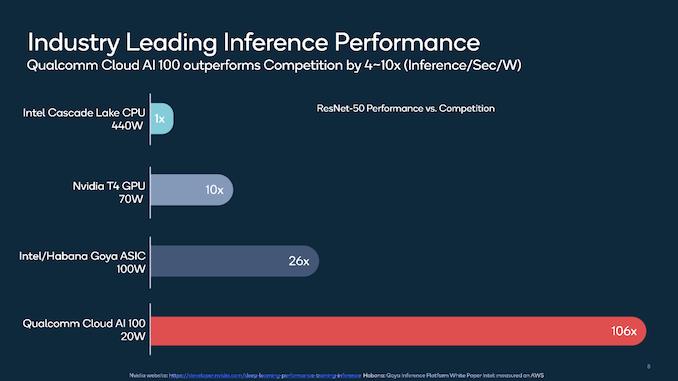

All three ship with up to 16 AI accelerator cores paired with up to 144MB of on-die memory (9MB per core) and up to 32GB of LPDDR4x on-card DRAM. Qualcomm’s specs claim that this configuration enables the Cloud AI 100 to outperform the competition by 106 times when measured by inferences per second per watt, using the ResNet-50 model as the benchmark. That is an impressive claim for Qualcomm’s first offering in this space.

According to details released, the Cloud AI 100, which is manufactured on a 7-nanometer process, shouldn’t exceed a power draw of 75 watts. Here’s the power and performance breakdown for each card:

- Dual M.2e: 15 watts, 70 TOPS

- Dual M.2: 25 watts, 200 TOPS

- PCIe: 75 watts, 400 TOPS
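
To compare the three cards on a single efficiency axis, the nominal figures above can be divided out into peak TOPS per watt. The following is a minimal sketch of that arithmetic, not a benchmark: the `CARDS` table and the function names are illustrative choices of mine, and the inputs are only the listed power and peak-throughput numbers, so real workloads will land somewhere below these ceilings.

```python
# Nominal power (watts) and peak throughput (TOPS) as listed in the
# breakdown above. The dictionary layout and names are illustrative.
CARDS = {
    "Dual M.2e": {"watts": 15, "tops": 70},
    "Dual M.2": {"watts": 25, "tops": 200},
    "PCIe": {"watts": 75, "tops": 400},
}


def tops_per_watt(watts, tops):
    """Return peak TOPS delivered per watt of nominal power."""
    return tops / watts


def efficiency_table(cards=CARDS):
    """Map each card name to its peak TOPS per watt, rounded to 2 places."""
    return {
        name: round(tops_per_watt(**spec), 2)
        for name, spec in cards.items()
    }


if __name__ == "__main__":
    for name, value in efficiency_table().items():
        print(f"{name}: {value} TOPS/W")
```

On these nominal numbers the Dual M.2 comes out ahead at 8 TOPS per watt, with the PCIe card at roughly 5.33 and the Dual M.2e at roughly 4.67. Peak TOPS per watt is a different measure from the inferences per second per watt that Qualcomm cites, so the two should not be compared directly.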

Developer Friendly is a Big Focus for Qualcomm with the Cloud AI 100

Knowing that winning the hearts and minds of developers will be important, Qualcomm is focusing on making sure that the new AI-focused offerings will be friendly for the developer community. The announcement addressed this specifically, sharing that the Cloud AI 100 and the products it powers will support a full range of developer tools, including compilers, debuggers, profilers, monitors, servicing, chip debuggers, and quantizers. This will extend to support for runtimes like ONNX, Glow, and XLA, and machine learning frameworks such as TensorFlow, Baidu’s PaddlePaddle, PyTorch, Keras, MXNet, and Microsoft’s Cognitive Toolkit, for applications like speech recognition, language translation, and computer vision.

Overall Impressions of the Qualcomm Cloud AI 100 launch

I believe the Cloud AI 100 provides a significant runway to deliver AI beyond the smartphone at scale. That isn’t to say the company won’t face competition in doing so, but its low-power, high-performance nature makes the Cloud AI 100 a substantial opportunity at the edge, where the product could be used in 5G edge boxes and 5G equipment, and of course in the datacenter as well. This will enable use cases in manufacturing, retail, health care, smart cities and more.

Further, I feel that Qualcomm is well positioned to be a strong contender in the low-power AI space, addressing needs from edge to cloud. The company most certainly benefits from its substantial investments in R&D and its experience delivering on-device AI through its Snapdragon platform.

It’s early days for Qualcomm and the Cloud AI 100, but this is a good start and represents a new opportunity for Qualcomm to leverage its deep R&D and engineering experience to extend its contributions to practical AI use cases that continue to gain market momentum.

Futurum Research provides industry research and analysis. These columns are for educational purposes only and should not be considered in any way investment advice.

Images: Qualcomm

The original version of this article was first published on Futurum Research.

Daniel Newman is the Principal Analyst of Futurum Research and the CEO of Broadsuite Media Group. Living his life at the intersection of people and technology, Daniel works with the world’s largest technology brands exploring Digital Transformation and how it is influencing the enterprise. From Big Data to IoT to Cloud Computing, Newman makes the connections between business, people and tech that are required for companies to benefit most from their technology projects, which leads to his ideas regularly being cited in CIO.Com, CIO Review and hundreds of other sites across the world. A 5x Best Selling Author including his most recent “Building Dragons: Digital Transformation in the Experience Economy,” Daniel is also a Forbes, Entrepreneur and Huffington Post Contributor. MBA and Graduate Adjunct Professor, Daniel Newman is a Chicago Native and his speaking takes him around the world each year as he shares his vision of the role technology will play in our future.