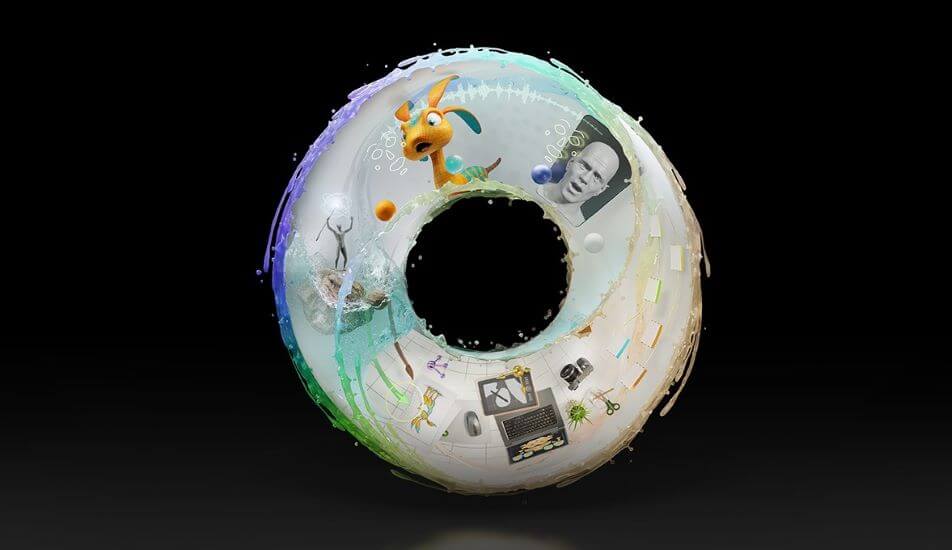

The News: At CES this week, NVIDIA announced NVIDIA Omniverse is now available for free for NVIDIA Studio creators using GeForce RTX and NVIDIA RTX GPUs. The company also announced additional updates to Omniverse Machinima and Omniverse Audio2Face that include new features and ecosystem updates. NVIDIA Omniverse is designed to be a real-time 3D virtual simulation platform that is a metaverse for engineers. Read the full announcement on the NVIDIA blog.

NVIDIA Omniverse Now Available for Free for Millions of Creators

Analyst Take: Until now, NVIDIA Omniverse has been in beta, but at CES this week the company announced the simulation platform is now available to creators. Billed as the metaverse for engineers, the platform has been downloaded by almost 100,000 creators, according to NVIDIA, and with good reason. These creators have been able to improve workflows and productivity with the various technologies that exist in the platform, like core rendering, physics and AI.

NVIDIA has invested millions of dollars into the Omniverse focusing on engineering simulations and 3D collaboration, which differs widely from the other metaverse products in the market today that focus more on meeting collaboration. Having the ability to create and collaborate on simulations for robotics, automotive, engineering, manufacturing, and other typically hands-on disciplines will go a long way in our hybrid collaboration world.

All of this investment and focus on the immersion of our digital and physical worlds is why I chose NVIDIA as a likely 2022 winner in the “Metaverse” mega trend for investors in a recent MarketWatch Op-ed.

Details about NVIDIA Omniverse Plus New Features

NVIDIA Omniverse connects graphics, AI, simulation and scalable compute in a single platform to enhance 3D workflows. Other features, including some newly announced ones, include:

- Omniverse Nucleus Cloud: This new feature enables easy collaboration of large 3D scenes whether people are in the same office or across the globe. It’s similar to collaborating in a cloud document, but for a 3D simulation or model.

- Updated Omniverse Ecosystem: By expanding support for 3D marketplaces and digital asset libraries, NVIDIA has made it much easier for creators in the Omniverse to build and develop what they desire. TurboSquid by Shutterstock, CGTrader, Sketchfab and Twinbru have released thousands of Omniverse-ready assets for creators. Others will soon follow, according to the announcement.

- Omniverse Machinima for RTX Creators: The gamer community, which makes up a significant portion of NVIDIA’s base, now has access to new, free characters, objects and environments from leading games in the Machinima library. Creators can bring these assets into their own scenes and developments.

- Omniverse Audio2Face: Giving creators the ability to depict a 3D face with just an audio track was revolutionary, but NVIDIA didn’t stop there. Now, with additional AI features, creators can avoid the manual blend-shaping process and focus on other endeavors.

Immersive Connected Virtual World is Growing

The metaverse has been a hot button topic for many organizations in the tech world for the last few months, and I expect this to be a topic that will continue to build momentum throughout 2022 and beyond. NVIDIA is contributing to the growing trend by making NVIDIA Omniverse widely available, but as I said before, this metaverse platform is unlike any other and has a great opportunity to be one of the leaders in the future. The potential use case scenarios for creative industries that need to improve hybrid collaboration are almost limitless at this point. Blending the physical world with the digital world is happening and NVIDIA is leading the charge.

Disclosure: Futurum Research is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Other insights from Futurum Research:

Making Markets EP17: Zoom, NVIDIA, Dell, All Goes Well, Even When the Market Disagrees

NVIDIA Announced its Self-Driving Tool Kit Will be Ready for Cars by 2024

Image Credit: NVIDIA

The original version of this article was first published on Futurum Research.

Daniel Newman is the Principal Analyst of Futurum Research and the CEO of Broadsuite Media Group. Living his life at the intersection of people and technology, Daniel works with the world’s largest technology brands exploring Digital Transformation and how it is influencing the enterprise. From Big Data to IoT to Cloud Computing, Newman makes the connections between business, people and tech that are required for companies to benefit most from their technology projects, which leads to his ideas regularly being cited in CIO.Com, CIO Review and hundreds of other sites across the world. A 5x Best Selling Author including his most recent “Building Dragons: Digital Transformation in the Experience Economy,” Daniel is also a Forbes, Entrepreneur and Huffington Post Contributor. MBA and Graduate Adjunct Professor, Daniel Newman is a Chicago Native and his speaking takes him around the world each year as he shares his vision of the role technology will play in our future.