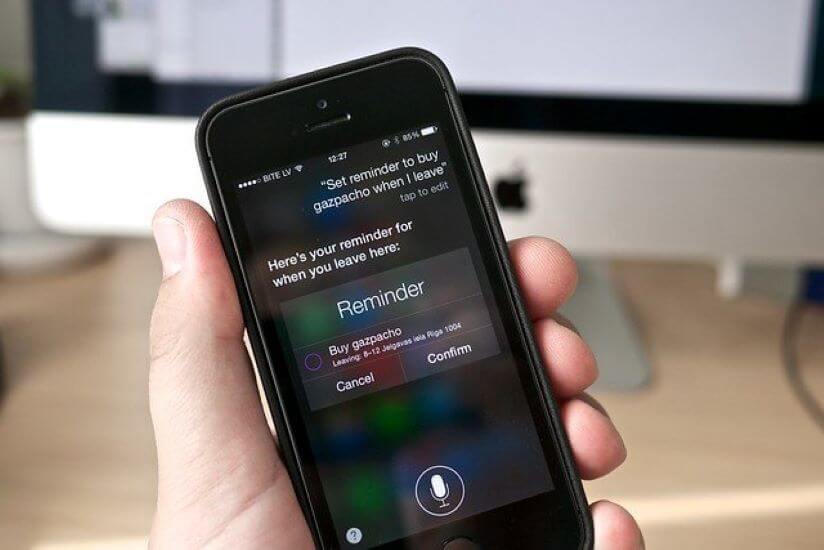

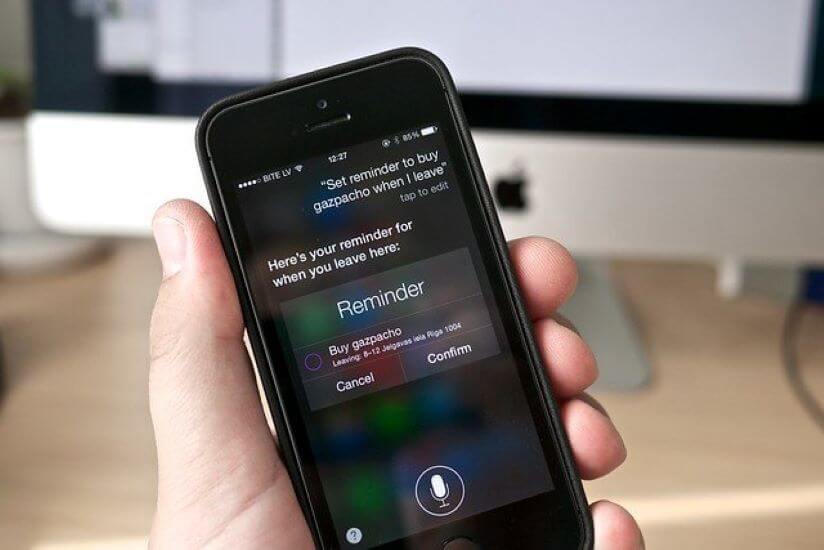

In response to concerns raised by a Guardian story last week over how recordings of Siri queries are used for quality control, Apple is suspending the Siri grading program worldwide. Apple says it will review the process, called grading, that it uses to determine whether Siri is hearing queries correctly or being invoked by mistake.

In addition, Apple will issue a future software update that lets Siri users choose whether to participate in the grading process. Read the story on TechCrunch.

Analyst Take: I’m not one to spread unwarranted love on Apple, but this U-turn is a smart move. It shows the company is serious about its privacy commitments, and it could help Apple differentiate itself from competitors that have not yet made material changes to their machine learning training strategies, which include third-party contractors listening to anonymized conversations with digital assistants and chatbots.

I’m torn on the topic as a whole. I realize that to enable the type of experience consumers seek from AI-powered tools like Siri, Alexa, Cortana and others, there need to be human/machine interactions in which humans validate the training process. The more data in the training set, the more likely the user experience will be optimized and embraced. The problem is that a larger training set requires volumes of data from a wide net of users, likely more than a typical beta program provides. This means companies need to draw on the power of their user base. However, this is where the grey area of opt-in and approval becomes important.

For anyone who has thought their AI-powered digital assistants aren’t listening, I want to remind you that in order to activate, they need to hear a wake word. This means they are always listening: to hear the wake word, they need to listen to everything else you say until it is spoken. How a device handles what it hears in that listening phase (collect, store, delete, analyze) should be disclosed, and that data should likely be deleted. However, it likely isn’t being deleted. If you didn’t think these tools listened until you woke them, rethink the process and you should see the light.

Overall, pausing the Siri grading program until Apple can clearly meet its privacy commitments was a smart move. In the next iteration, Apple will allow people to opt in (or maybe manually opt out), and this is a step in the right direction.

Image Courtesy of Flickr

The original version of this article was first published on Futurum Research.

Daniel Newman is the Principal Analyst of Futurum Research and the CEO of Broadsuite Media Group. Living his life at the intersection of people and technology, Daniel works with the world’s largest technology brands, exploring Digital Transformation and how it is influencing the enterprise. From Big Data to IoT to Cloud Computing, Newman makes the connections between business, people and tech that companies need to benefit most from their technology projects, and his ideas are regularly cited in CIO.com, CIO Review and hundreds of other sites across the world. A five-time best-selling author whose most recent book is “Building Dragons: Digital Transformation in the Experience Economy,” Daniel is also a Forbes, Entrepreneur and Huffington Post contributor. An MBA and graduate adjunct professor, Daniel Newman is a Chicago native, and his speaking takes him around the world each year as he shares his vision of the role technology will play in our future.